On November 3-4, 2023, the Neely Center hosted the Psychology of Technology Institute’s 7th annual New Directions in Research conference. The conference theme this year was “The Psychology of AI Value Alignment”. We are thrilled to share that the event brought together over 130 participants from across the world and featured over 20 presentations covering the latest research on topics such as “Using Large Language Models to Help People be Their ‘Best’ Selves”, “Integrating AI and Democracy”, “Can Gen AI Help End HIV?”, and “When and Why Do Chatbots Satisfy the Human Need for Social Connection?”. Executives from Ernst & Young, Khan Academy, Healthvana, AIDS Healthcare Foundation, the United Nations, Common Sense Media, Pinterest, and the American Psychological Association discussed the various ways AI could be implemented to improve technology’s impact on society.

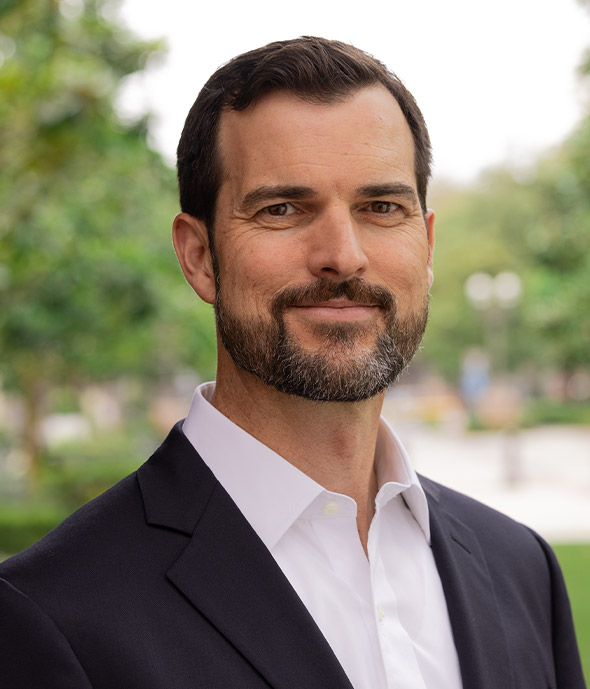

“There was terrific energy from start to finish,” said Nathanael Fast, executive director of the Neely Center. “Over the years, we’ve found that technologists and behavioral scientists are eager to learn from each other, and when these kinds of cross-disciplinary conversations happen early in the design process we can ultimately build better and safer products.”

Fast co-organized the conference with Ravi Iyer, managing director of the Neely Center, and Juliana Schroeder, Harold Furst Chair in Management Philosophy and Values at the UC Berkeley Haas School of Business.